Try it out here: https://github.com/XiangpengHao/parquet-linter

> cargo install parquet-linter-cli

> parquet-linter data.parquet

warning low-compression-ratio

--> column[2]("pid")

aggregated compression ratio is 1.09 (SNAPPY) across 1/1 row groups; data is nearly incompressible

fix: set column pid compression uncompressed

warning dictionary-encoding-cardinality

--> column[1]("ts_ns")

dictionary data pages fell back to PLAIN in 1/1 row groups; estimated cardinality is moderate (~132048 distinct / 380089 non-null = 35%), dictionary page size may be too small

fix: set column ts_ns dictionary_page_size_limit 2097152

Parquet is a spec, not an implementation

When we talk about Parquet, we usually mean one of its reader or writer implementations, most likely the Java, C++ (used by PyArrow), Rust, or DuckDB one.

When we complain that Parquet is not great for our use case, we're really complaining that the Parquet files produced by a specific implementation, with a specific set of parameters, are not good enough.

As a spec, Parquet is flexible: it places few restrictions on how data is stored, and it allows fine-grained configuration of almost every aspect of the file format. There's no single best "Parquet" for all use cases.

Configuring the right parameters for the right use case is not easy. It often requires a deep understanding of the cascading effects of encodings, compression, layouts, data types, and page/row group sizes. It’s likely that no single person on the planet can get it right on the first try.
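To make the cascading effects concrete, take the `ts_ns` warning from the example at the top. The sketch below is back-of-the-envelope arithmetic, not parquet-linter's actual logic. It assumes `ts_ns` is stored as an 8-byte INT64, that the file was written with the Rust `parquet` crate's default 1 MiB `dictionary_page_size_limit`, and that the dictionary holds one plain-encoded entry per distinct value. The function names are made up for illustration.

```rust
/// Assumed writer default: the Rust `parquet` crate caps dictionary pages at 1 MiB.
const DEFAULT_DICT_PAGE_LIMIT: usize = 1024 * 1024;

/// Share of non-null values that are distinct (0.0 to 1.0).
fn cardinality_ratio(distinct: usize, non_null: usize) -> f64 {
    if non_null == 0 {
        return 0.0;
    }
    distinct as f64 / non_null as f64
}

/// Rough dictionary size: one plain-encoded entry per distinct value
/// of a fixed-width type (8 bytes for INT64).
fn estimated_dict_bytes(distinct: usize, value_width: usize) -> usize {
    distinct * value_width
}

/// If the estimated dictionary does not fit under `current_limit`
/// (so, under this estimate, the writer falls back to PLAIN),
/// suggest the next power of two that would fit it.
fn suggest_dict_page_limit(distinct: usize, value_width: usize, current_limit: usize) -> Option<usize> {
    let needed = estimated_dict_bytes(distinct, value_width);
    if needed <= current_limit {
        None
    } else {
        Some(needed.next_power_of_two())
    }
}
```

With 132048 distinct values out of 380089, the cardinality ratio is about 35%, and the dictionary needs 1,056,384 bytes, just over the 1,048,576-byte limit. Rounding up to the next power of two gives 2097152, the same value suggested in the example output, though the linter's own heuristic may get there differently.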

We need different kinds of Parquet

You might ask: why not just follow best practices? Because "best" is hard to define.

Use case 1: long term archival

Probably the most common use case for Parquet is archiving data for long-term storage. Here we don't care about decoding speed; we want the data to be as compact as possible. Smaller Parquet files mean a lower S3 bill.

Use case 2: fast query performance

Parquet is the backbone of lakehouses like Iceberg, Delta Lake, and DuckLake. Here we want to maximize query performance. As a recent study (Liquid Cache) shows, decoding Parquet accounts for a significant share of query time. To minimize decoding time, we want lightweight compression, or even no compression at all (as in Databricks' local cache).

Use case 3: ecosystem compatibility

Parquet is an open format that many engines read directly: Spark, Trino, DuckDB, DataFusion, etc., all support it. But not all readers support the same set of Parquet features. In this use case, we'd like to stick to the most conservative set of Parquet features, the ones supported by all readers.

Vision: Parquet Linter

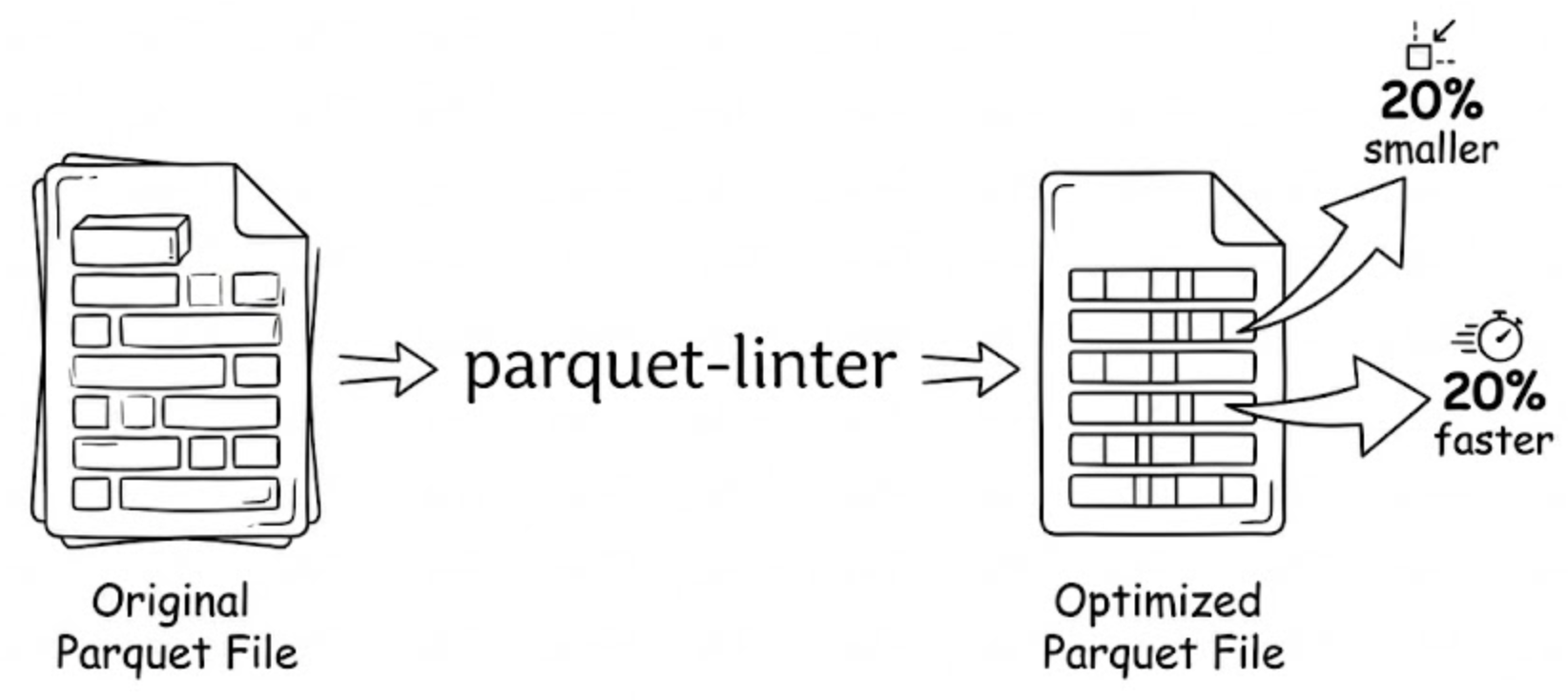

Introducing Parquet Linter: https://github.com/XiangpengHao/parquet-linter, a tool to check compression, decompression, and compatibility issues in your Parquet files.

I envision three levels of Parquet Linter.

Level 1: pure gain

In this level, Parquet Linter checks for issues that make Parquet files worse for all use cases and suggests better configurations.

For example, if a compressed column has a compression ratio of about 1.0 (this happens surprisingly often!), we should just remove the compression, because it is pure overhead.
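A minimal sketch of such a check, assuming we already have the total uncompressed and compressed byte counts that Parquet records in each column chunk's metadata. The 1.1 cut-off and all names here are hypothetical; the example at the top only tells us that an aggregated ratio of 1.09 was flagged.

```rust
/// Sizes of one column chunk (one column within one row group).
struct ChunkSizes {
    uncompressed_bytes: u64,
    compressed_bytes: u64,
}

/// Hypothetical cut-off: below this ratio the codec is treated as pure overhead.
const MIN_USEFUL_RATIO: f64 = 1.1;

/// Compression ratio of a column, aggregated over all of its row groups.
fn aggregated_ratio(chunks: &[ChunkSizes]) -> f64 {
    let raw: u64 = chunks.iter().map(|c| c.uncompressed_bytes).sum();
    let packed: u64 = chunks.iter().map(|c| c.compressed_bytes).sum();
    if packed == 0 {
        return 1.0;
    }
    raw as f64 / packed as f64
}

/// Suggests dropping compression when it barely shrinks the column.
fn check_low_compression(column: &str, chunks: &[ChunkSizes]) -> Option<String> {
    if aggregated_ratio(chunks) < MIN_USEFUL_RATIO {
        Some(format!("set column {column} compression uncompressed"))
    } else {
        None
    }
}
```

Aggregating over row groups before deciding avoids flipping the codec because of one unlucky row group; whether parquet-linter weighs row groups the same way is not stated here.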

Level 2: trade-off guided

In this level, we allow the user to specify the trade-offs they want to prioritize, e.g., choosing compression ratio over decoding speed. Then Parquet Linter checks the configuration and suggests a better set of configurations that aligns with the user’s trade-offs.
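One way to express such a trade-off is a weighted score. This sketch is an assumption about how Level 2 could work, not how it does: each candidate configuration has a measured file size and decode time, both are normalized against a baseline, and a single hypothetical `size_weight` knob decides which one matters more.

```rust
/// A candidate configuration with measured costs (e.g. from a benchmark run).
struct Candidate {
    name: &'static str,
    file_mb: f64,
    decode_ms: f64,
}

/// Picks the candidate with the lowest weighted cost.
/// `size_weight` is in [0, 1]: 1.0 cares only about file size,
/// 0.0 only about decode time. Costs are divided by the first
/// candidate's (the baseline's) so MB and ms are on the same scale.
fn pick(candidates: &[Candidate], size_weight: f64) -> Option<&Candidate> {
    let base = candidates.first()?;
    let score = |c: &Candidate| {
        size_weight * c.file_mb / base.file_mb
            + (1.0 - size_weight) * c.decode_ms / base.decode_ms
    };
    candidates
        .iter()
        .min_by(|a, b| score(*a).total_cmp(&score(*b)))
}
```

For example, with three made-up candidates, baseline (100 MB, 100 ms), heavy-codec (70 MB, 130 ms), and uncompressed (140 MB, 60 ms), a `size_weight` of 1.0 picks heavy-codec and 0.0 picks uncompressed.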

Level 3: towards intelligence

The Parquet Linter is essentially a model; it takes workload features as input and outputs a set of configurations to store the data.

Currently, this model is a set of empirical rules, biased by the experience of a poor database PhD student. As is happening everywhere else, we could replace these rules with a learned model, maybe even a large language model. I believe this is a very interesting research direction.
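Seen this way, the rule set is just one implementation of a features-to-fixes interface, and a learned model could be another. A minimal sketch of that structure; `ColumnFeatures`, `Rule`, `Fix`, both rules, and their thresholds are hypothetical and not taken from the parquet-linter source.

```rust
/// Hypothetical per-column features a linter might extract from metadata.
struct ColumnFeatures {
    name: String,
    compression_ratio: f64,
    dict_fell_back: bool,
    dict_page_limit: usize,
}

/// A suggested configuration change, mirroring the `fix:` lines in the CLI output.
#[derive(Debug, PartialEq)]
struct Fix(String);

/// Anything that maps features to fixes: an empirical rule or, later, a model.
trait Rule {
    fn check(&self, col: &ColumnFeatures) -> Option<Fix>;
}

struct LowCompressionRatio;

impl Rule for LowCompressionRatio {
    fn check(&self, col: &ColumnFeatures) -> Option<Fix> {
        // Hypothetical cut-off: a ratio below 1.1 is treated as overhead.
        (col.compression_ratio < 1.1)
            .then(|| Fix(format!("set column {} compression uncompressed", col.name)))
    }
}

struct DictionaryFallback;

impl Rule for DictionaryFallback {
    fn check(&self, col: &ColumnFeatures) -> Option<Fix> {
        // Hypothetical heuristic: double the limit when the dictionary overflowed.
        col.dict_fell_back.then(|| {
            Fix(format!(
                "set column {} dictionary_page_size_limit {}",
                col.name,
                col.dict_page_limit * 2
            ))
        })
    }
}

/// Runs every rule over every column and collects the suggested fixes.
fn lint(rules: &[Box<dyn Rule>], columns: &[ColumnFeatures]) -> Vec<Fix> {
    let mut fixes = Vec::new();
    for col in columns {
        for rule in rules {
            if let Some(fix) = rule.check(col) {
                fixes.push(fix);
            }
        }
    }
    fixes
}
```

Swapping in a learned model would then mean adding another `Rule` implementation (or replacing `lint`) while the feature extraction and the fix format stay the same.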

Does it work?

I picked 7 files (somewhat randomly) from HuggingFace’s datasets and ran the Parquet Linter (Level 1) on them.

We track two metrics separately:

- decode time (ms): time to convert a Parquet file into Arrow RecordBatches
- file size (MB): size of the Parquet file on disk

Even with just the simple heuristics from Level 1, Parquet Linter reduces both total file size (by 5.67%, and by up to 19.24% for a single file) and total decode time (by 19.55%, and by up to 39.31% for a single file). Not every file benefits on both metrics: File 3 decodes 40.56% slower. Level 2 and 3 are currently on the roadmap. With the collective wisdom of the community, I'm sure we can do much better.

File Size Leaderboard (MB, lower is better)

| | File 0 | File 1 | File 2 | File 3 | File 4 | File 5 | File 6 | Total |
|---|---|---|---|---|---|---|---|---|
| HuggingFace default | 171.38 | 137.88 | 105.55 | 88.78 | 145.97 | 62.59 | 247.95 | 960.10 |
| parquet-linter | 171.35 (-0.02%) | 128.31 (-6.94%) | 90.10 (-14.64%) | 71.70 (-19.24%) | 141.41 (-3.12%) | 58.08 (-7.21%) | 244.73 (-1.30%) | 905.68 (-5.67%) |

Decode Time Leaderboard (ms, lower is better)

| | File 0 | File 1 | File 2 | File 3 | File 4 | File 5 | File 6 | Total |
|---|---|---|---|---|---|---|---|---|
| HuggingFace default | 243.59 | 302.44 | 272.20 | 27.34 | 285.08 | 84.62 | 377.25 | 1592.53 |
| parquet-linter | 237.92 (-2.33%) | 212.03 (-29.89%) | 213.47 (-21.58%) | 38.43 (+40.56%) | 205.84 (-27.80%) | 51.36 (-39.31%) | 322.11 (-14.61%) | 1281.15 (-19.55%) |
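Each percentage in the two tables is the relative change from the HuggingFace default, (linter - default) / default × 100, so negative values are improvements and positive ones are regressions. A small sketch that reproduces the cells; the helper names are made up for illustration.

```rust
/// Relative change from `baseline` to `new`, in percent.
fn pct_change(baseline: f64, new: f64) -> f64 {
    (new - baseline) / baseline * 100.0
}

/// Formats a leaderboard cell such as "171.35 (-0.02%)".
fn cell(baseline: f64, new: f64) -> String {
    format!("{new:.2} ({:+.2}%)", pct_change(baseline, new))
}

/// Sum over files, as in the "Total" column.
fn total(values: &[f64]) -> f64 {
    values.iter().sum()
}
```

For instance, `cell(27.34, 38.43)` gives "38.43 (+40.56%)", the File 3 decode-time cell. Totals computed from the rounded per-file values can differ from the table's totals in the last digit.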

Benchmark parquet-linter

You can verify the results by running the benchmark yourself:

cargo build --release --package parquet-linter-leaderboard

./target/release/parquet-leaderboard --from-linter --iterations 3